Visual Servoing Platform

version 3.6.1 under development (2025-02-18)

This tutorial focuses on visual servoing simulation on a unicycle robot. The case study is a Pioneer P3-DX mobile robot equipped with a camera.

We assume that you have already followed the Tutorial: Image-based visual servo, which helps to understand this one.

Note that all the material (source code) described in this tutorial is part of the ViSP source code (in the tutorial/robot/pioneer folder) and can be found at https://github.com/lagadic/visp/tree/master/tutorial/robot/pioneer.

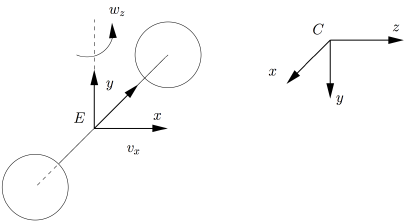

In this section we consider the unicycle shown above.

This robot has 2 dof: $(v_x, w_z)$, the translational and rotational velocities that are applied at point E, considered as the end-effector. A camera is rigidly attached to the robot at point C. The homogeneous transformation between C and E is given by cMe. This transformation is constant.

The robot position wMe evolves with respect to a world frame. When a new joint velocity is applied to the robot using setVelocity(), the position of the camera with respect to the world frame, wMc, is also updated.
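As a rough illustration of how the robot pose evolves when a velocity $(v_x, w_z)$ is applied, the sketch below integrates a planar unicycle model over one sampling period. It is plain C++ and does not use ViSP: the `Pose2D` type, the `integrateUnicycle` function and the first-order (Euler) update are assumptions made for this example, not a description of what setVelocity() does internally.

```cpp
#include <cmath>

// Planar pose of the end-effector E in the world frame (hypothetical type).
struct Pose2D {
  double x;     // position along the world x axis (m)
  double y;     // position along the world y axis (m)
  double theta; // heading angle (rad), counter-clockwise positive
};

// Advance the pose by one sampling period dt (s) under a constant forward
// velocity v_x (m/s) and rotational velocity w_z (rad/s), using a
// first-order Euler step.
Pose2D integrateUnicycle(const Pose2D &p, double v_x, double w_z, double dt)
{
  Pose2D q;
  q.x = p.x + dt * v_x * std::cos(p.theta);
  q.y = p.y + dt * v_x * std::sin(p.theta);
  q.theta = p.theta + dt * w_z;
  return q;
}
```

Calling `integrateUnicycle` repeatedly with the velocities returned by a controller gives the trajectory of the robot; since the camera is rigidly attached, its pose follows from the constant camera/end-effector transformation.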

To control the robot by visual servoing we need to introduce two visual features. If we consider a 3D point at position O as the target, then to position the robot relative to the target we can use as visual features the coordinate $x$ of the point in the image plane and $\log(Z/Z^*)$, with $Z$ the depth of the point in the camera frame and $Z^*$ its desired value. The first feature, implemented in vpFeaturePoint, allows to control $w_z$, while the second one, implemented in vpFeatureDepth, allows to control $v_x$. The position of the target in the world frame is given by the wMo transformation. Thus the current visual feature is $\mathbf{s} = (x, \log(Z/Z^*))^\top$ and the desired feature is $\mathbf{s}^* = (0, 0)^\top$.
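To make the two features concrete, here is a minimal sketch that computes them from the coordinates of the target point in the camera frame. It is plain C++, independent of ViSP; the `Features` type and `computeFeatures` function are hypothetical names, and the sketch assumes `Z > 0` and `Zd > 0`.

```cpp
#include <cmath>

// The two visual features used in this tutorial (hypothetical type).
struct Features {
  double x;    // abscissa of the point in the image plane: X / Z
  double logZ; // depth feature: log(Z / Z*)
};

// X, Z: coordinates of the target in the camera frame (Z is the depth, in m).
// Zd:   desired depth Z* (m).
Features computeFeatures(double X, double Z, double Zd)
{
  Features s;
  s.x = X / Z;               // perspective projection
  s.logZ = std::log(Z / Zd); // zero when the desired depth is reached
  return s;
}
```

With this definition, a point centered in the image at the desired depth gives exactly the desired feature $(0, 0)$.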

The code that does the simulation is provided in tutorial-simu-pioneer.cpp.

We now provide a line-by-line explanation of the code.

First we define cdMo, the desired position the camera has to reach with respect to the target. Its translation along the y axis, $t_y$, should be different from zero to avoid a singularity. The camera has to keep a distance of 0.5 meter from the target.

Secondly we specify cMo, the initial position of the camera with respect to the target.

Thirdly, by introducing our simulated robot, we can compute the position of the target wMo and of the camera wMc with respect to the world frame.
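The relation between these frames can be sketched with planar rigid transformations: the target pose in the world frame is the composition of wMc and cMo, and cMo can be recovered later from the inverse of wMc and wMo. The code below is a simplified 2D stand-in written for this explanation; `Frame2D`, `compose` and `inverse` are hypothetical names and do not correspond to the 3D homogeneous matrices used in ViSP.

```cpp
#include <cmath>

// Planar rigid transformation aMb: rotation by `angle`, then translation
// (tx, ty), mapping coordinates expressed in frame b into frame a.
struct Frame2D {
  double tx, ty, angle;
};

// aMc = aMb * bMc
Frame2D compose(const Frame2D &aMb, const Frame2D &bMc)
{
  const double c = std::cos(aMb.angle), s = std::sin(aMb.angle);
  return {aMb.tx + c * bMc.tx - s * bMc.ty,
          aMb.ty + s * bMc.tx + c * bMc.ty,
          aMb.angle + bMc.angle};
}

// bMa = aMb^-1: rotation R^T, translation -R^T * t
Frame2D inverse(const Frame2D &aMb)
{
  const double c = std::cos(aMb.angle), s = std::sin(aMb.angle);
  return {-(c * aMb.tx + s * aMb.ty),
          -(-s * aMb.tx + c * aMb.ty),
          -aMb.angle};
}
```

For example, `compose(wMc, cMo)` gives the fixed target pose wMo once at start-up, and `compose(inverse(wMc), wMo)` gives the updated cMo each time the camera has moved.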

Once all the frames are defined, we define a 3D point and its coordinates (0,0,0) in the object frame as the target.

We then compute its coordinates in the camera frame.

A visual servo task is then instantiated.

Next, we specify the kind of visual servoing control law that will be used to control our mobile robot. Since the camera is mounted on the robot, we consider the case of an eye-in-hand visual servo. The robot controller provided in vpSimulatorPioneer allows to send $(v_x, w_z)$ velocities. This controller also implements the robot jacobian ${}^e\mathbf{J}_e$ that links the end-effector velocity skew vector $\mathbf{v}_e$ to the control velocities $(v_x, w_z)$. The velocity twist matrix ${}^c\mathbf{V}_e$, which is also provided, allows to transform a velocity skew vector expressed in the end-effector frame into the camera frame.

We then specify that the interaction matrix $\mathbf{L}$ is computed from the visual features at the desired position. The constant gain that allows an exponential decrease of the feature error is set to 0.2.

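To summarize, the following control law will be used:

$$\left[\begin{array}{c} v_x \\ w_z \end{array}\right] = -0.2 \left( \mathbf{L}_{\mathbf{s}^*} \, {}^c\mathbf{V}_e \, {}^e\mathbf{J}_e \right)^{+} (\mathbf{s} - \mathbf{s}^*)$$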
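To see what this control law computes, the sketch below evaluates it for a deliberately simplified camera model. The assumptions, which are not those of the ViSP simulator, are: the camera optical center sits on the robot rotation axis, the optical axis points along the robot heading, the image x axis points to the robot's right, and $w_z$ is positive counter-clockwise. Under these assumptions the product of the interaction matrix, the twist matrix and the robot jacobian reduces to a 2x2 matrix, so the pseudo-inverse is a plain inverse. The names `Jacobian2`, `taskJacobian` and `controlLaw` are hypothetical.

```cpp
#include <cmath>

struct Velocity {
  double v_x; // forward velocity (m/s)
  double w_z; // rotational velocity (rad/s)
};

// 2x2 matrix [a b; c d] mapping (v_x, w_z) to the time derivative of
// the features (x, log(Z/Z*)) under the simplified camera model.
struct Jacobian2 {
  double a, b, c, d;
};

// Row 1: dx/dt          =  (x/Z) v_x + (1 + x^2) w_z
// Row 2: d log(Z/Z*)/dt = -(1/Z) v_x - x w_z
Jacobian2 taskJacobian(double x, double Z)
{
  return {x / Z, 1.0 + x * x, -1.0 / Z, -x};
}

// u = -lambda * J^-1 * e, with e = s - s* = (e_x, e_logZ).
Velocity controlLaw(const Jacobian2 &J, double e_x, double e_logZ, double lambda)
{
  const double det = J.a * J.d - J.b * J.c; // equals 1/Z for taskJacobian()
  const double u0 = (J.d * e_x - J.b * e_logZ) / det;
  const double u1 = (-J.c * e_x + J.a * e_logZ) / det;
  return {-lambda * u0, -lambda * u1};
}
```

Evaluating `taskJacobian(0.0, Zd)` gives the matrix at the desired position. With it, the law reduces to `v_x = lambda * Zd * e_logZ` and `w_z = -lambda * e_x`, which shows in this simplified setting why the depth feature drives the translation and the image abscissa drives the rotation.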

From the robot position we retrieve the velocity twist transformation ${}^c\mathbf{V}_e$, which is then passed to the task.

We do the same with the robot jacobian ${}^e\mathbf{J}_e$.

Let us now consider the visual features. We first instantiate the current and desired position of the 3D target point as a visual feature point.

The current visual feature is directly computed from the perspective projection of the point position in the camera frame.

The desired position of the feature is set to (0,0). The depth of the point cdMo[2][3] is required to compute the feature position.

Finally, only the position of the feature along x is added to the task.

We now consider the second visual feature $\log(Z/Z^*)$, which corresponds to the depth of the point. The current and desired features are instantiated as vpFeatureDepth objects.

Then, we get the current depth Z and the desired depth Zd of the target. From these values, we initialize the current depth feature and also the desired one. Finally, we add the feature to the task.
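For reference, the two features added to the task correspond to the following rows of the interaction matrix, written in their usual form with respect to the camera velocity skew vector $(v_x, v_y, v_z, \omega_x, \omega_y, \omega_z)$ expressed in the camera frame. They are recalled here as background rather than taken from the tutorial code:

$$\mathbf{L}_x = \left[\begin{array}{cccccc} -1/Z & 0 & x/Z & xy & -(1+x^2) & y \end{array}\right]$$

$$\mathbf{L}_{\log(Z/Z^*)} = \left[\begin{array}{cccccc} 0 & 0 & -1/Z & -y & x & 0 \end{array}\right]$$

Stacking the two rows gives the $2 \times 6$ matrix $\mathbf{L}$ used in the control law. Both rows depend on the depth $Z$, which is why a depth value has to be supplied when each feature is built.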

Then comes the material used to plot in real time the curves that show the evolution of the velocities, the visual error and the estimation of the depth. The corresponding lines are not explained in this tutorial, but should be easy to understand after reading Tutorial: Real-time curves plotter tool.

In the visual servo loop we retrieve the robot position and compute the new position of the camera with respect to the target. We then compute the coordinates of the point in the new camera frame. Based on these new coordinates, we update the point visual feature s_x and also the depth visual feature.

We also update the task with the values of the velocity twist matrix cVe and the robot jacobian eJe. After all these updates, we are able to compute the control law. The computed velocities are then sent to the robot.

Finally, we stop the infinite loop when the visual error reaches a value that is considered small enough.
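The loop structure described above can be sketched as a small closed-loop simulation in plain C++, without ViSP. Everything here is an assumption made for illustration: the `SimResult` type and `simulate` function are hypothetical, the camera optical center is placed on the robot rotation axis with its optical axis along the heading and its image x axis to the right, the control law uses the matrix evaluated at the desired position, the pose is integrated with an Euler step, and the stopping threshold of `1e-4` on the squared error norm is an arbitrary choice.

```cpp
#include <cmath>

struct SimResult {
  int iterations; // index of the last iteration executed
  double error2;  // last squared norm of the feature error
  double x;       // last image abscissa of the target
  double Z;       // last depth of the target (m)
};

// Target fixed at (tx, ty) in the world frame; the robot starts at the
// origin, heading along the world x axis. Zd is the desired depth Z*.
SimResult simulate(double tx, double ty, double Zd, double lambda,
                   double dt, int maxIter)
{
  double rx = 0.0, ry = 0.0, theta = 0.0; // robot pose in the world frame
  SimResult r{0, 0.0, 0.0, 0.0};
  for (int k = 0; k < maxIter; ++k) {
    // Target coordinates in the camera frame (Z forward, X to the right).
    const double dx = tx - rx, dy = ty - ry;
    const double Z = std::cos(theta) * dx + std::sin(theta) * dy;
    const double X = std::sin(theta) * dx - std::cos(theta) * dy;

    // Current features and error with respect to s* = (0, 0).
    const double e_x = X / Z;
    const double e_Z = std::log(Z / Zd);
    r.iterations = k;
    r.error2 = e_x * e_x + e_Z * e_Z;
    r.x = e_x;
    r.Z = Z;
    if (r.error2 < 1e-4) {
      break; // visual error considered small enough
    }

    // Control law with the matrix evaluated at the desired position.
    const double v_x = lambda * Zd * e_Z;
    const double w_z = -lambda * e_x;

    // Apply the velocities during one sampling period.
    rx += dt * v_x * std::cos(theta);
    ry += dt * v_x * std::sin(theta);
    theta += dt * w_z;
  }
  return r;
}
```

For instance, `simulate(1.0, -0.3, 0.5, 0.2, 0.04, 5000)` starts with the target 1 m ahead and 0.3 m to the right, and runs until the target is centered in the image at a depth close to 0.5 m.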

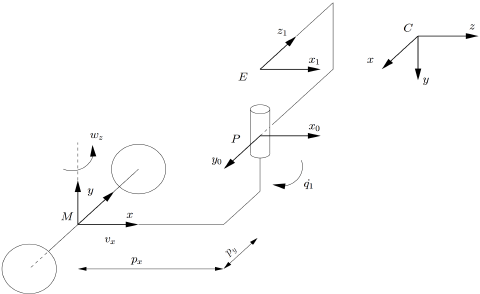

In this section we consider the unicycle shown above.

This robot has 3 dof: $(v_x, w_z, \dot{q}_1)$, with, as previously, $(v_x, w_z)$ the translational and rotational velocities, applied here at point M, and $\dot{q}_1$ the pan velocity of the head. The position of the end-effector E depends on the pan position $q_1$. The camera at point C is attached to the robot at point E. The homogeneous transformation between C and E is given by cMe. This transformation is constant.

If we consider the same visual features as previously, $\mathbf{s} = (x, \log(Z/Z^*))^\top$ with the desired feature $\mathbf{s}^* = (0, 0)^\top$, we are able to simulate this new robot simply by replacing vpSimulatorPioneer with vpSimulatorPioneerPan. The code is available in tutorial-simu-pioneer-pan.cpp.

Note that here we compute the control law using the current interaction matrix, that is, the one computed with the current visual feature values.

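The following control law is used:

$$\left[\begin{array}{c} v_x \\ w_z \\ \dot{q}_1 \end{array}\right] = -0.2 \left( \mathbf{L}_{\mathbf{s}} \, {}^c\mathbf{V}_e \, {}^e\mathbf{J}_e \right)^{+} (\mathbf{s} - \mathbf{s}^*)$$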

You are now ready to see the next Tutorial: How to boost your visual servo control law.