Visual Servoing Platform

version 3.6.1 under development (2025-02-28)

We assume that you have "ViSP for iOS", obtained by following either Tutorial: Installation from prebuilt packages for iOS devices or Tutorial: Installation from source for iOS devices. Either tutorial provides the visp3.framework and opencv2.framework needed to build an application for iOS devices.

In this tutorial we suppose that visp3.framework is installed in a folder named <framework_dir>/ios. If <framework_dir> corresponds to $HOME/framework, you should get the following:

$ ls $HOME/framework/ios

opencv2.framework visp3.framework
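The check above can also be scripted. The following is a minimal sketch, not part of the ViSP material: `check_frameworks` is a hypothetical helper name, and it assumes the folder layout described in this tutorial (both frameworks directly under `<framework_dir>/ios`).

```shell
# Hypothetical helper (not part of ViSP): report whether both frameworks
# are present under <framework_dir>/ios.
# Usage: check_frameworks [framework_dir]   (defaults to $HOME/framework)
check_frameworks() {
  dir="${1:-$HOME/framework}/ios"
  rc=0
  for fw in opencv2.framework visp3.framework; do
    if [ -d "$dir/$fw" ]; then
      echo "found:   $dir/$fw"
    else
      echo "missing: $dir/$fw"
      rc=1
    fi
  done
  # Non-zero exit status when at least one framework is missing
  return $rc
}
```

Calling `check_frameworks "$HOME/framework"` prints one line per framework and returns a non-zero status if either one is missing, which makes it usable in a setup script before opening Xcode.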

Note that all the material (source code and Xcode project) described in this tutorial is part of the ViSP source code (in the tutorial/ios/GettingStarted folder) and can be found at https://github.com/lagadic/visp/tree/master/tutorial/ios/GettingStarted.

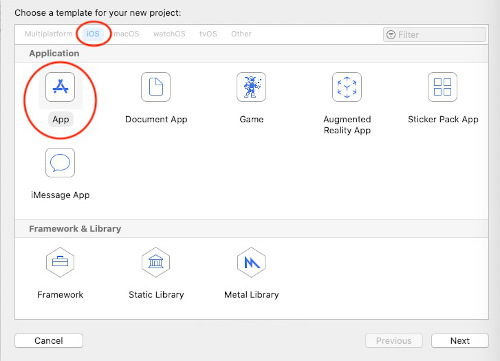

"File > New > Project" menu and create a new iOS "App"

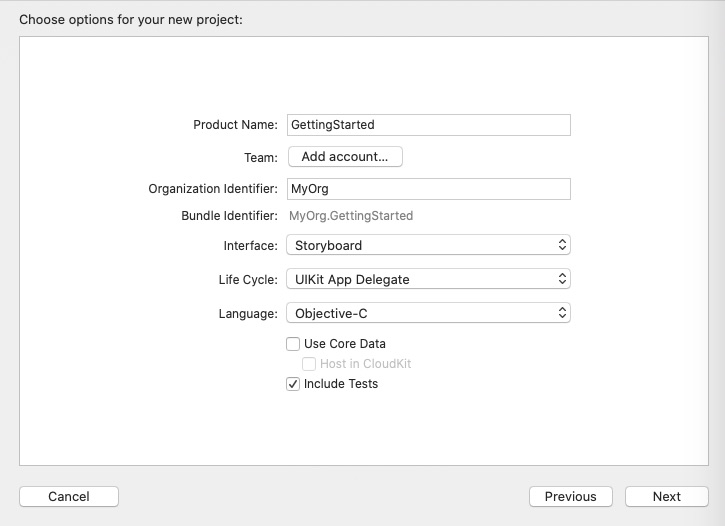

"Next" button and complete the options for your new project:

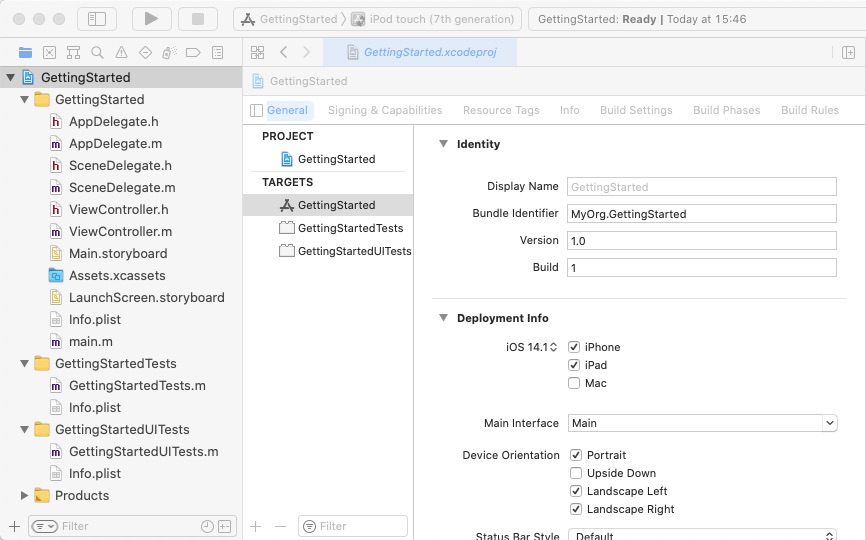

"Next" button and select the folder where the new project will be saved. Once done click on "Create". Now you should have something similar to:

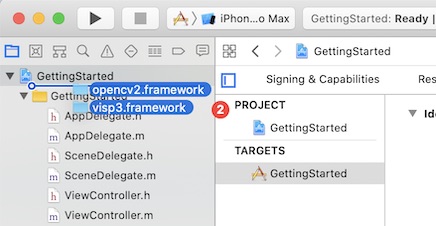

Now we need to link visp3.framework with the Xcode project.

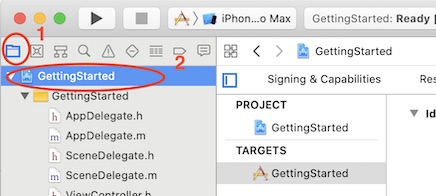

"Getting Started" (2)

<framework_dir>/ios folder in the left hand panel containing all the project files.

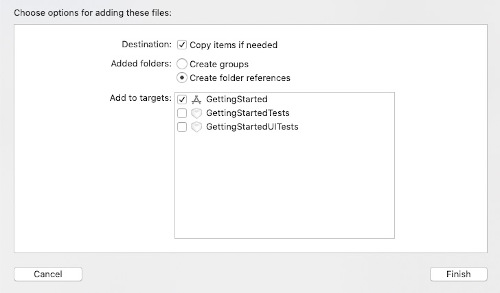

"Copy item if needed" to ease visp3.framework and opencv2.framework headers location addition to the build options

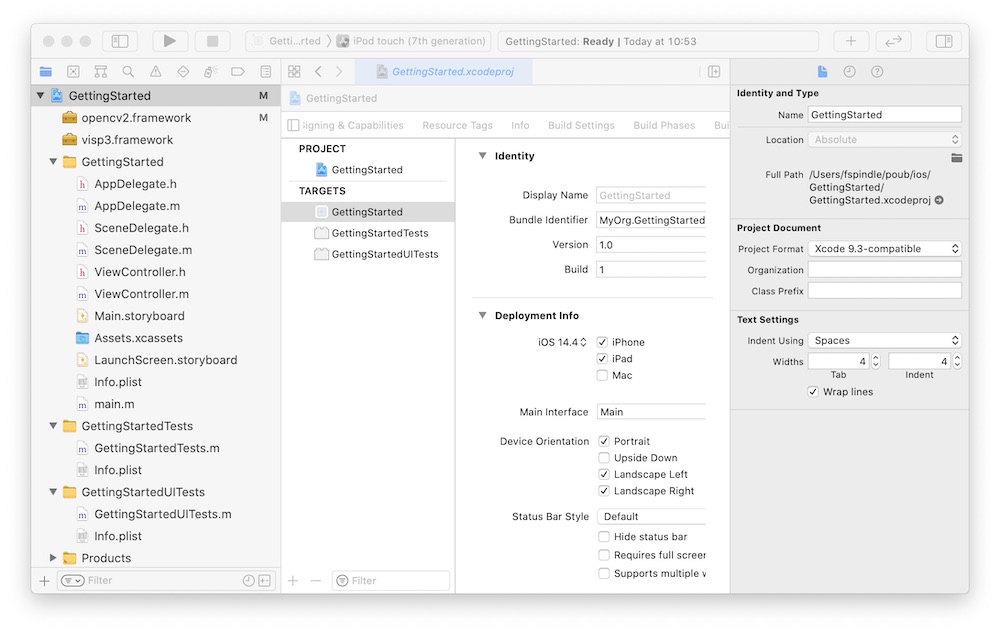

"Finish". You should now get something similar to the following image

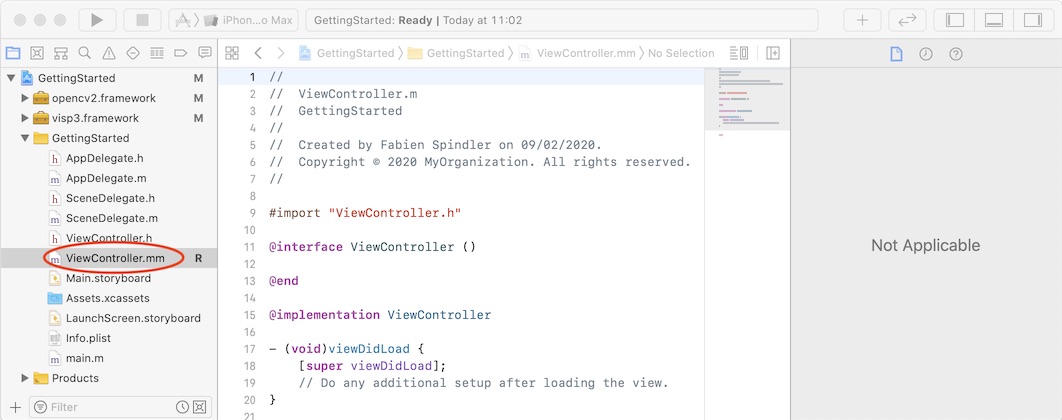

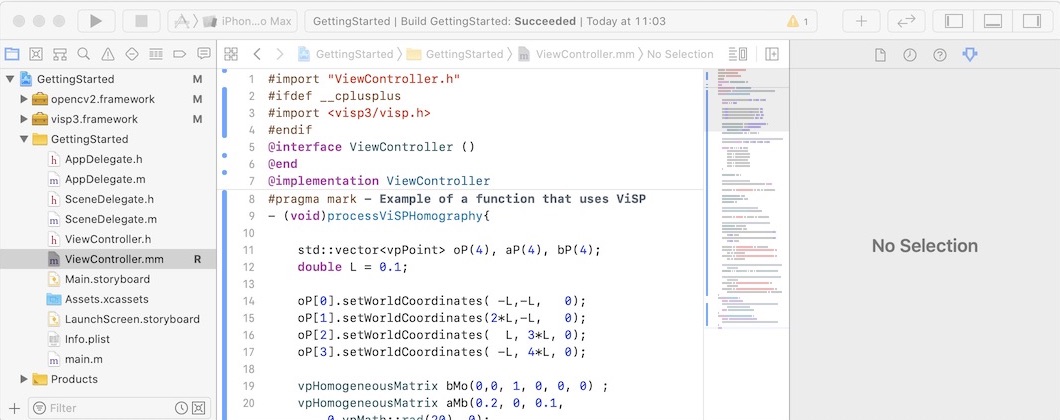

Because the application mixes Objective-C and ViSP C++ code, rename the ViewController.m file to ViewController.mm.

Copy the content of the $VISP_WS/visp/tutorial/ios/GettingStarted/GettingStarted/ViewController.mm file into ViewController.mm. Note that this Objective-C code is inspired by tutorial-homography-from-points.cpp. The sample defines a function named processViSPHomography(). This function is finally called in viewDidLoad().

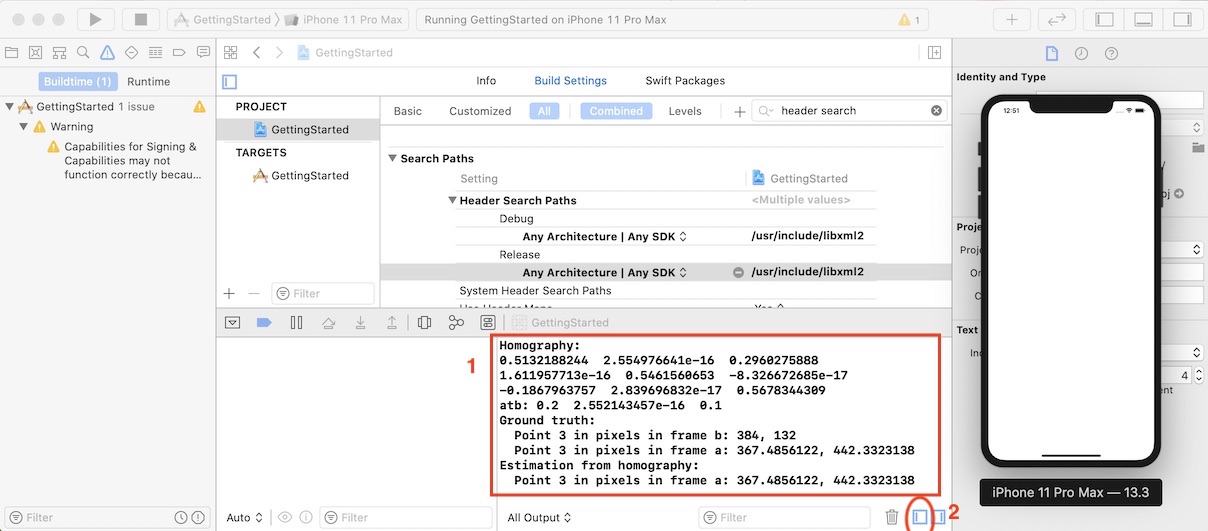

"Getting Started" application using Xcode "Product > Build" menu. "Product > Run" menu (Simulator or device does not bother because we are just executing code). You should obtain these logs showing that visp code was correctly executed by your iOS project.

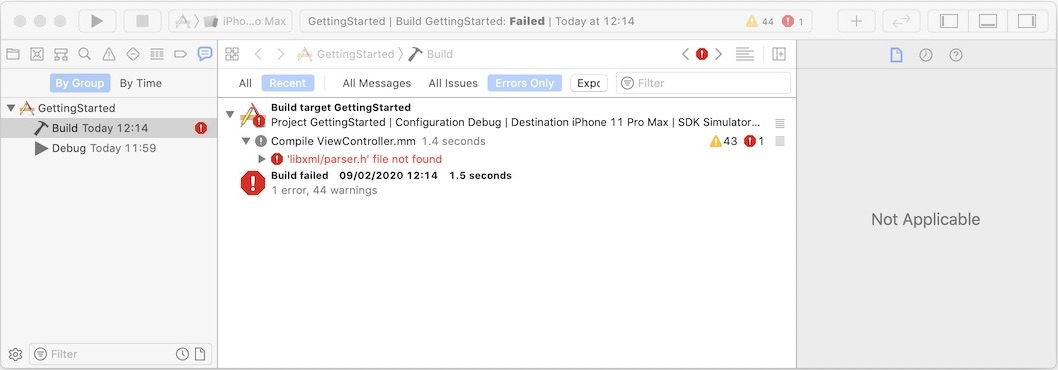

All Output.If you encounter the following issue iOS error: libxml/parser.h not found

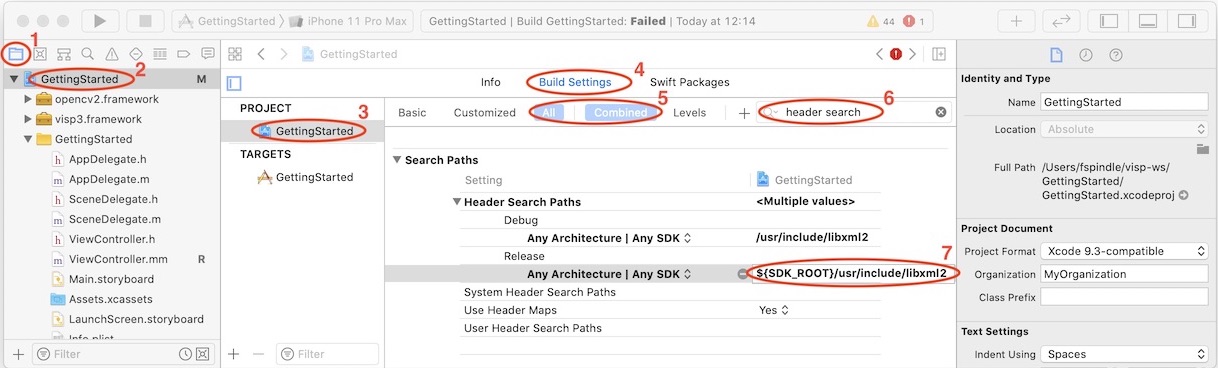

the solution to this problem is to add libxml path. To this end, as shown in the next image:

"Build Settings" panel (4)"All" and "Combined" settings view (5)"header search" in the search tool bar (6)"Header Search Paths" press + button for "Debug" and "Release" configurations and add a new line (7): You are now ready to see the Tutorial: Image processing on iOS.