|

ViSP

2.10.0

|

With ViSP you can track a blob using either the vpDot or the vpDot2 class. By blob we mean a region of the image that has the same gray level. The blob can be white on a black background, or black on a white background.

In this tutorial we focus on the vpDot2 class, which provides more functionality than the vpDot class. In particular, as presented in the section Blob auto detection and tracking, it can automatically detect blobs that have the same characteristics as a reference blob.

The next videos show the result of ViSP blob tracker on two different objects:

In the next subsections we explain how to achieve this kind of tracking, first using a live firewire camera, then using a live v4l2 camera, which can be a USB camera or a Raspberry Pi camera module.

The following code, also available in the tutorial-blob-tracker-live-firewire.cpp file provided in the ViSP source tree, grabs images from a firewire camera and tracks a blob. The initialisation is done with a user mouse click on a pixel that belongs to the blob.

To acquire images from a firewire camera we use the vp1394TwoGrabber class on unix-like systems or the vp1394CMUGrabber class under Windows. These classes are described in the Tutorial: Image frame grabbing.

From now on, we assume that you have successfully followed the Tutorial: How to create and build a CMake project that uses ViSP on Unix or Windows and the Tutorial: Image frame grabbing. Hereafter we explain the new lines that are introduced.

Then we modify some default settings so that the contour pixels and the position of the center of gravity are drawn in overlay with a thickness of 2 pixels.

Then we wait for the user to initialize the tracker through a mouse click in the blob to track.
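To give an intuition of what a click-based initialization has to produce, here is a minimal standalone sketch that grows a region of similar gray level around the clicked pixel and returns its area and center of gravity. It is only an illustration and not how vpDot2 is implemented. The `BlobStats` type, the `growBlobFromSeed()` function, the `tolerance` parameter and the image stored as a rectangular vector of rows are all assumptions made for this example.

```cpp
#include <cstddef>
#include <cstdlib>
#include <queue>
#include <utility>
#include <vector>

// Hypothetical result type for this sketch (not a ViSP class).
struct BlobStats {
  std::size_t area; // number of pixels in the blob
  double cog_u;     // column of the center of gravity
  double cog_v;     // row of the center of gravity
};

// Grow a 4-connected region from the clicked pixel (seed_v, seed_u), accepting
// pixels whose gray level differs from the seed's by at most `tolerance`.
// The image is assumed to be rectangular (all rows have the same length).
BlobStats growBlobFromSeed(const std::vector<std::vector<unsigned char> > &image,
                           std::size_t seed_v, std::size_t seed_u, int tolerance)
{
  BlobStats stats = {0, 0.0, 0.0};
  if (image.empty() || seed_v >= image.size() || seed_u >= image[0].size())
    return stats; // seed outside the image: report an empty blob

  const long height = static_cast<long>(image.size());
  const long width = static_cast<long>(image[0].size());
  const int seed_level = image[seed_v][seed_u];

  std::vector<std::vector<bool> > visited(image.size(), std::vector<bool>(image[0].size(), false));
  std::queue<std::pair<std::size_t, std::size_t> > pending;
  pending.push(std::make_pair(seed_v, seed_u));
  visited[seed_v][seed_u] = true;

  const int dv[4] = {-1, 1, 0, 0};
  const int du[4] = {0, 0, -1, 1};
  double sum_u = 0.0, sum_v = 0.0;

  while (!pending.empty()) {
    const std::pair<std::size_t, std::size_t> p = pending.front();
    pending.pop();
    ++stats.area;
    sum_v += static_cast<double>(p.first);
    sum_u += static_cast<double>(p.second);

    for (int k = 0; k < 4; ++k) {
      const long nv = static_cast<long>(p.first) + dv[k];
      const long nu = static_cast<long>(p.second) + du[k];
      if (nv < 0 || nu < 0 || nv >= height || nu >= width)
        continue; // neighbor outside the image
      const std::size_t v = static_cast<std::size_t>(nv);
      const std::size_t u = static_cast<std::size_t>(nu);
      if (visited[v][u])
        continue;
      if (std::abs(static_cast<int>(image[v][u]) - seed_level) > tolerance)
        continue; // gray level too different: not part of the blob
      visited[v][u] = true;
      pending.push(std::make_pair(v, u));
    }
  }

  stats.cog_u = sum_u / static_cast<double>(stats.area);
  stats.cog_v = sum_v / static_cast<double>(stats.area);
  return stats;
}
```

Calling `growBlobFromSeed(image, v, u, 10)` with the coordinates of the clicked pixel returns the blob that contains it, whether the blob is white on black or black on white, since only the difference to the seed gray level matters.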

The tracker is now initialized. The tracking can be performed on new images:

The following code, also available in the tutorial-blob-tracker-live-v4l2.cpp file provided in the ViSP source tree, grabs images from a camera compatible with the Video for Linux Two driver (v4l2) and tracks a blob. Webcams, and more generally USB cameras, as well as the Raspberry Pi Camera Module can be used.

To acquire images from a v4l2 camera we use the vpV4l2Grabber class on unix-like systems. This class is described in the Tutorial: Image frame grabbing.

The code is the same as the one presented in the previous subsection, except that here we use the vpV4l2Grabber class to grab images from USB cameras. We have also modified the while loop in order to catch the exception raised when the tracker fails:

Whenever possible, this allows the tracker to recover from a previous tracking failure (due to blur, a blob outside the image, etc.) on the next available images.
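The idea of catching the failure inside the loop can be sketched without any camera or ViSP dependency. In the following minimal example, `FakeTracker` and `trackAllFrames()` are hypothetical names introduced for illustration: the fake tracker throws on frames flagged as unusable, and the loop catches the exception so that tracking is attempted again on the next frame. The tutorial source may handle the failure differently, for instance by asking for a new initialization.

```cpp
#include <cstddef>
#include <stdexcept>
#include <vector>

// Hypothetical stand-in for a blob tracker (not a ViSP class): it throws on
// frames flagged as unusable, as a tracker could on blur or when the blob
// leaves the image.
struct FakeTracker {
  void track(bool frame_is_usable)
  {
    if (!frame_is_usable)
      throw std::runtime_error("blob lost");
  }
};

// Process every frame. A failure on one frame is caught so that the loop
// continues and tracking is attempted again on the next frame.
// Returns the number of frames on which tracking succeeded.
std::size_t trackAllFrames(FakeTracker &tracker, const std::vector<bool> &frames)
{
  std::size_t tracked = 0;
  for (std::size_t i = 0; i < frames.size(); ++i) {
    try {
      tracker.track(frames[i]);
      ++tracked;
    }
    catch (const std::exception &) {
      // Tracking failed on this frame: skip it and retry on the next one.
    }
  }
  return tracked;
}
```

Without the try/catch block, the first unusable frame would end the loop and the remaining frames would never be processed.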

The following example, also available in the tutorial-blob-auto-tracker.cpp file provided in the ViSP source tree, shows how to detect blobs in the first image and then track all the detected blobs. This functionality is only available with the vpDot2 class. Here we consider an image that is provided in the ViSP source tree.

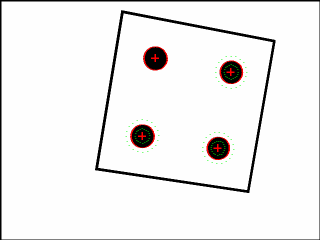

Here is a screenshot of the resulting program:

And here is the detailed explanation of the source:

First we create an instance of the tracker.

Then, two cases are handled. The first case, when learn is set to true, consists in learning the blob characteristics. The user has to click in a blob that serves as the reference blob. The size, area, minimum and maximum gray levels, and some precision parameters will then be used to search for similar blobs in the whole image.

If you have a precise idea of the dimensions of the blob to search for, the second case consists in setting the reference characteristics directly.
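To illustrate how reference characteristics and a precision parameter can be used to select similar blobs, here is a minimal standalone sketch. `BlobShape`, `isClose()` and `keepSimilar()` are hypothetical names, and the single relative `tolerance` is an assumption made for this example: the precision parameters of vpDot2 are defined by the class itself and do not necessarily have this meaning.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical description of a blob for this sketch (not a ViSP class).
struct BlobShape {
  double width;  // bounding box width in pixels
  double height; // bounding box height in pixels
  double area;   // surface in pixels
};

// True when `value` lies within a relative `tolerance` of `reference`,
// e.g. tolerance = 0.2 accepts values up to 20% away from the reference.
bool isClose(double value, double reference, double tolerance)
{
  return std::fabs(value - reference) <= tolerance * reference;
}

// Keep only the candidates whose width, height and area are all close to
// those of the reference blob.
std::vector<BlobShape> keepSimilar(const std::vector<BlobShape> &candidates,
                                   const BlobShape &reference, double tolerance)
{
  std::vector<BlobShape> kept;
  for (std::size_t i = 0; i < candidates.size(); ++i) {
    const BlobShape &c = candidates[i];
    if (isClose(c.width, reference.width, tolerance) &&
        isClose(c.height, reference.height, tolerance) &&
        isClose(c.area, reference.area, tolerance)) {
      kept.push_back(c);
    }
  }
  return kept;
}
```

The reference can come either from a learned blob or from values set by hand, which mirrors the two cases described above.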

Once the blob characteristics are known, searching for similar blobs in the image is simply done by:

Here blob_list contains the list of the blobs that are detected in the image I. When learning is enabled, the blob that was used as reference is already tracked and is therefore not in the list of auto-detected blobs. We add it to the end of the list:

Finally, when a new image is available, we track all the blobs:

You are now ready to see the next Tutorial: Keypoint tracking.